Thanks to language models like GPT3, computers can now generate text that readers struggle to distinguish from human-written copy. The idea isn't new. Even highly regarded institutions like the BBC have been using machine learning systems to produce content since at least 2019. What has changed, though, is how cheap and easy it has become for publishers of any size to use systems like this.

In 2020, OpenAI released GPT3 as a public API that anyone can sign up to use (with a few restrictions). GPT3 is the latest in a line of text-generating models. It produces human-readable text based on what it learned from 45TB of content drawn from Wikipedia, books and the wider web.

Training on this huge body of data allows GPT3 to generate text by predicting which words are likely to follow a given sequence. This gives it incredible flexibility to produce content of almost any type and style with minimal input.

The release of OpenAI’s API has sparked an entire industry of AI writing tools. Some improve on the base OpenAI model by fine-tuning it on additional data, while others simply provide an easy-to-use front end. All aim to put content generation in the hands of a wider audience.

Producing written content has never been easier

Using these tools, it is child’s play to produce an entire article in under a minute with a few clicks. After a few minutes’ training, one person could turn out hundreds of original articles a day, and a few lines of code can turn that into thousands. Unsurprisingly, many people are doing just that, seeing AI as the latest shortcut to easy money.

The risks of AI content

The output these systems produce is undoubtedly impressive, but it is far from perfect. The crawl of data that underpins GPT3 is now more than two years old. Ask it about the pandemic, the war in Ukraine, or even who the current President of the United States is, and the gaps show.

The system is prone to other errors too. For one, it doesn’t always distinguish fact from fiction. It can invent quotes and attribute them to people who don’t exist, simply because that is the text it predicts should come next. Without careful supervision, GPT3 also often rambles, drifting from topic to topic without ever holding a narrative. In short, it’s easy to use AI to produce readable content, but much harder to make it produce good content.

What does Google think about it?

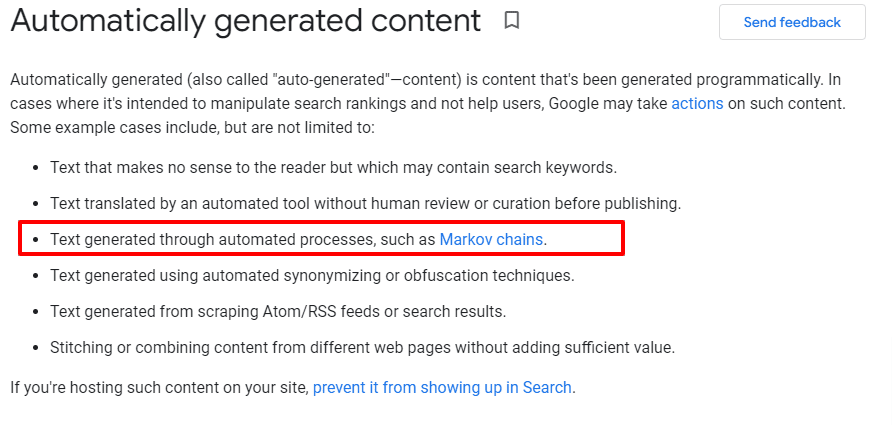

Google has said that it sees AI-generated content in the same light as other types of content automation. Google’s Search Advocate, John Mueller, has repeatedly reminded website owners that Google’s Webmaster Guidelines are clear on the topic of automatically generated content, and that Google sees tools like GPT3 no differently from other techniques used to generate content that manipulates rankings.

In fact, the Webmaster Guidelines specifically called out Markov chains as something Google may take action against. GPT3 is far more sophisticated than a Markov chain, but it works on a similar principle: it generates text by predicting which words are likely to follow the ones before.
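For a sense of what the guidelines are describing, here is a minimal Python sketch of a word-level Markov chain text generator. It is purely illustrative: `build_chain`, `generate` and the toy corpus are hypothetical names chosen for this example, not part of any Google or OpenAI tool, and GPT3 replaces this simple lookup table with a large neural network that takes far more context into account.

```python
from __future__ import annotations

import random
from collections import defaultdict


def build_chain(text: str, order: int = 1) -> dict[tuple[str, ...], list[str]]:
    """Map each run of `order` consecutive words to every word that followed it."""
    words = text.split()
    chain: defaultdict[tuple[str, ...], list[str]] = defaultdict(list)
    for i in range(len(words) - order):
        state = tuple(words[i : i + order])
        chain[state].append(words[i + order])
    return dict(chain)


def generate(
    chain: dict[tuple[str, ...], list[str]],
    start: list[str],
    length: int,
    seed: int | None = None,
) -> str:
    """Extend `start` by up to `length` words.

    `start` should contain as many words as the chain's order. Each new word
    is picked using only the last `order` words, which is the Markov property.
    """
    rng = random.Random(seed)
    order = len(start)
    output = list(start)
    for _ in range(length):
        followers = chain.get(tuple(output[-order:]))
        if not followers:
            # Dead end: this state was never followed by anything in the source text.
            break
        # Repeated entries make common successors proportionally more likely.
        output.append(rng.choice(followers))
    return " ".join(output)


corpus = "the cat sat on the mat and the cat ate the fish"
chain = build_chain(corpus, order=1)
print(generate(chain, ["the"], length=8, seed=42))
```

Because each new word depends only on the last `order` words, output from a chain like this tends to lose the thread quickly. Models like GPT3 condition on a much longer stretch of preceding text, which goes a long way toward explaining why their output reads so much more naturally.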

Is it game over for AI writing then?

With concerns over quality and the risk of action from Google, is it already game over for AI text? I don’t think so. These models are still in their infancy and improving quickly, and many of the issues we see with GPT3 are likely to be forgotten once newer models are released. Google’s own LaMDA language model even convinced one of the company’s own engineers that it had become sentient. If AI writing becomes indistinguishable from human writing, whether Google likes it or not may become a moot point.

More important than how content is produced is the quality of the output. Despite the limitations of current systems, it is perfectly possible to produce good-quality output with them today.

If you care about content quality, the current generation of AI writers is best used as an assistant rather than for fully automated content production. Switching them to “full-auto” and producing an endless stream of content is unlikely to result in quality, sustainable output. However, using the tools to expand on a writer’s ideas, generate topics, improve writing and generally speed up the production process can work well. Using AI to ease the load on content writers and help them work more efficiently allows content to scale without sacrificing quality.

Where is this all heading?

AI isn’t just being used to produce text. Images, video, music and even code are all being generated by AI systems today. This risks an explosion of cheap content that adds little value to the web. If that happens, we can expect both Google and advertisers to respond. Detecting AI content is already hard and will only get harder as the systems improve, so any response seems likely to focus on the quality of content rather than on whether a human wrote it. If so, those who refuse to let content quality slip in the name of cost or volume stand to do well.